For the last three years, the AI industry has been caught in a “Parameter Arms Race.” Experts in Silicon Valley believed that to reach human-level reasoning—the ability to plan, double-check logic, and solve complex math problems—a model needed at least 100 billion parameters and enough electricity for a small city.

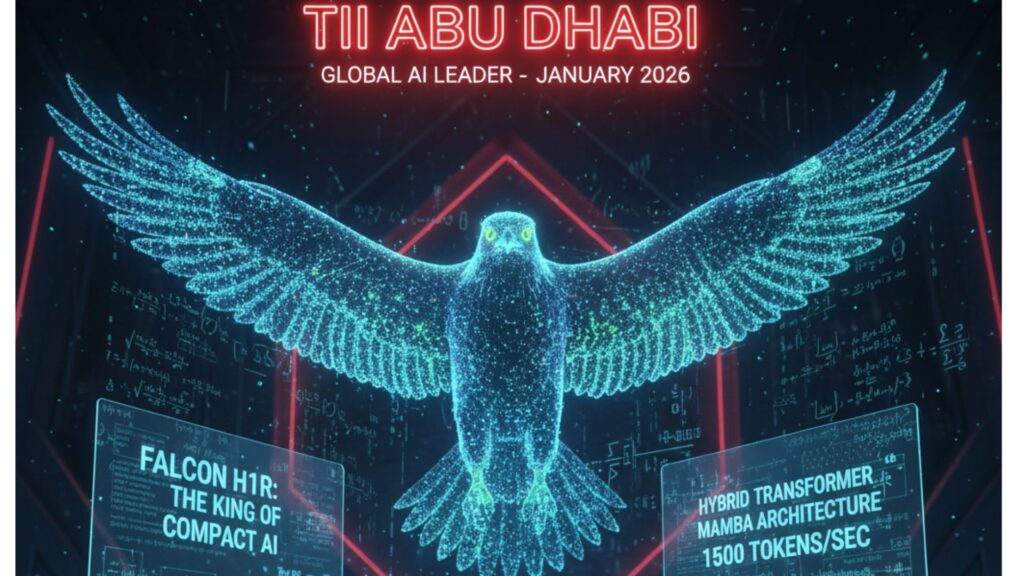

Falcon H1R 7B: The Architectural Revolution That Put “Elite Reasoning” in Your Pocket

On January 5, 2026, that belief was turned on its head.

Falcon H1R 7B

The Technology Innovation Institute (TII) in Abu Dhabi officially launched Falcon H1R 7B, the “Reasoning” version of their high-performance H1 family. With only 7 billion parameters, this model has achieved results that were once only possible for “frontier” models. It doesn’t just predict the next word; it actively thinks through problems.

The Engineering Breakthrough: Hybrid Transformer-Mamba Architecture

The most important takeaway from the H1R release is its unique “skeleton.” Most modern AI models, including the Llama and GPT series, use a “pure Transformer” architecture. While powerful, Transformers have a major downside: their attention cost is “quadratic.” Every new token is compared with every earlier token, so doubling the length of a conversation roughly quadruples the attention compute, and the cache of stored keys and values keeps growing with every token. The result is “RAM bloat” and significant slowdowns on long inputs.
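To make the quadratic-versus-linear contrast concrete, here is a toy cost model in Python. It is a sketch under simplifying assumptions: a single layer, a hypothetical hidden size `d` and state size `state_size`, and abstract operation counts rather than real FLOPs or bytes. The names `attention_cost` and `ssm_cost` are illustrative, not part of any library.

```python
# Toy cost model (hypothetical): one layer, hidden size d, abstract units only.

def attention_cost(n_tokens: int, d: int) -> tuple[int, int]:
    """Return (pairwise_score_ops, cached_values) for full self-attention.

    Every token is scored against every token: n * n dot products of length d.
    The key/value cache stores one key and one value vector per token.
    """
    score_ops = n_tokens * n_tokens * d
    kv_cache = 2 * n_tokens * d
    return score_ops, kv_cache


def ssm_cost(n_tokens: int, d: int, state_size: int) -> tuple[int, int]:
    """Return (update_ops, state_values) for a linear recurrent scan.

    Each token updates a fixed-size state once, so work grows linearly with n
    and the state itself never grows.
    """
    update_ops = n_tokens * d * state_size
    state = d * state_size
    return update_ops, state


if __name__ == "__main__":
    d, state_size = 64, 16
    for n in (1_000, 2_000, 4_000):
        att_ops, att_mem = attention_cost(n, d)
        ssm_ops, ssm_mem = ssm_cost(n, d, state_size)
        print(f"n={n:>5}: attention ops={att_ops:>13,} mem={att_mem:>9,} | "
              f"ssm ops={ssm_ops:>11,} mem={ssm_mem:,}")
```

Doubling the number of tokens quadruples the attention comparisons and doubles the key/value cache, while the scan's work only doubles and its state stays the same size.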

The Hybrid Solution

Falcon H1R 7B uses a Parallel Hybrid Mixer Block. This combines two different technologies:

Transformer Attention: The “eyes” of the AI. It lets the model focus on specific, crucial details in a long document with complete clarity.

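Mamba (State Space Model): The “lungs” of the AI. Mamba is a newer architecture that processes a sequence in linear time: it carries a fixed-size internal state forward, updating it once per token instead of revisiting every earlier token. That makes it extremely fast, and its memory use stays flat even when reading a 1,000-page book.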
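The parallel idea can be sketched in a few lines of Python. This is a deliberately tiny toy, not TII's implementation: each token is a single number rather than a vector, the attention branch is one causal head with fixed weights, and the Mamba branch is replaced by the simplest linear state-space recurrence (`h[t] = a*h[t-1] + b*x[t]`). Real Mamba makes these coefficients depend on the input ("selective"), and Falcon H1R's actual projections, head counts, and the way it merges the two branches are not described in this article, so every parameter below (`wq`, `wk`, `wv`, `a`, `b`, `c`) and the simple "add both outputs to a residual" merge are hypothetical placeholders.

```python
import math


def causal_attention(xs, wq=1.0, wk=1.0, wv=1.0):
    """One causal attention head over scalar tokens: token t may look at tokens 0..t."""
    out = []
    for t, x_t in enumerate(xs):
        query = wq * x_t
        # Score the current token against every earlier token (the quadratic part).
        scores = [query * (wk * xs[s]) for s in range(t + 1)]
        top = max(scores)
        weights = [math.exp(score - top) for score in scores]  # stable softmax numerators
        total = sum(weights)
        out.append(sum(w / total * (wv * xs[s]) for s, w in enumerate(weights)))
    return out


def linear_ssm_scan(xs, a=0.9, b=1.0, c=1.0):
    """Simplest linear state-space recurrence: h[t] = a*h[t-1] + b*x[t], y[t] = c*h[t]."""
    h = 0.0  # fixed-size state: it never grows with sequence length
    out = []
    for x_t in xs:
        h = a * h + b * x_t
        out.append(c * h)
    return out


def parallel_hybrid_block(xs):
    """Feed the same input to both branches and add their outputs to a residual."""
    attn_out = causal_attention(xs)
    ssm_out = linear_ssm_scan(xs)
    return [x + y_attn + y_ssm for x, y_attn, y_ssm in zip(xs, attn_out, ssm_out)]


print(parallel_hybrid_block([1.0, 0.0, 0.0]))
```

Both branches read the same input sequence, which is what "parallel" means here: the attention branch can look back at any earlier token directly, while the scan only sees its running state `h`.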

By running these two together, the Falcon H1R 7B gains the in-depth analytical focus of a Transformer along with the incredible speed of Mamba. This is why it can handle a massive 256K Context Window (about 400 pages of text) while being nearly twice as fast as its competitors.
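The "about 400 pages" figure can be checked with back-of-the-envelope arithmetic. The conversion rates are assumptions, not figures from TII: roughly 0.75 English words per token and about 500 words per printed page, with 256K read as 256 × 1,024 tokens.

```python
def tokens_to_pages(tokens: int, words_per_token: float = 0.75, words_per_page: int = 500) -> float:
    """Convert a token count to approximate printed pages (assumed conversion rates)."""
    words = tokens * words_per_token
    return words / words_per_page


context_tokens = 256 * 1024  # 262,144 tokens
print(f"{context_tokens:,} tokens is about {tokens_to_pages(context_tokens):.0f} pages")
# 262,144 tokens is about 393 pages
```

Tokenizers and page layouts vary, so treat this as an order-of-magnitude check rather than an exact conversion.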

Benchmarking the Impossible: 7B vs. The Giants

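The performance data released today by TII’s Chief Researcher, Dr. Hakim Hacid, shows the model pushing out the “Pareto frontier”: the set of models that no competitor beats on both size and accuracy at once. Once a model sits on that frontier, getting better results means paying for a bigger or costlier model.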

- The “Math King” Status

One of the toughest tests for any AI is AIME-24, the 2024 American Invitational Mathematics Examination. Every answer is an integer from 0 to 999 that must match exactly, so a model cannot score by guessing; it has to reason its way to the result.
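AIME-24 combines the 2024 AIME I and AIME II papers, 15 problems each, for 30 problems in total. Because each answer is a single integer, grading is plain exact matching, and reported percentages are commonly averaged over several sampled attempts per problem, which is why they need not correspond to a whole number of problems. The grader below is a hypothetical sketch written under those assumptions; TII's exact evaluation protocol is not described in this article.

```python
from fractions import Fraction


def is_correct(model_answer: str, reference: int) -> bool:
    """AIME-style exact match: the answer must be the same integer in 0..999."""
    try:
        value = int(model_answer.strip())
    except ValueError:
        return False  # anything that is not a plain integer scores zero
    return 0 <= value <= 999 and value == reference


def mean_accuracy(attempts_per_problem: list[list[str]], references: list[int]) -> float:
    """Average exact-match accuracy over every sampled attempt (often reported as avg@k)."""
    results = [
        is_correct(answer, ref)
        for attempts, ref in zip(attempts_per_problem, references)
        for answer in attempts
    ]
    return sum(results) / len(results)


# Two hypothetical problems, two sampled attempts each: 3 of 4 attempts are right.
print(mean_accuracy([["204", "204"], ["7", "25"]], [204, 25]))  # 0.75

# Blind guessing: one chance in 1,000 per problem, i.e. 3/100 of a problem out of 30.
print(Fraction(1, 1000) * 30)  # 3/100
```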

Falcon H1R 7B: 88.1%

ServiceNow Apriel 1.5 (15B): 86.2%

Alibaba Qwen3 (32B): 63.6%

NVIDIA Nemotron H (47B): 49.7%

Despite being much smaller, the Falcon H1R 7B outperformed the 32B and 47B models by a significant margin. This suggests that TII has figured out how to compress “logic” instead of just “information.”
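The "Pareto frontier" claim can be checked directly against the four AIME-24 scores listed above. This sketch treats parameter count (in billions) as a stand-in for cost, which is a simplification: real cost also depends on architecture, context length, and hardware. A model is dropped if some other model is no larger and scores at least as well, while being strictly better on one of the two.

```python
def pareto_frontier(models: list[tuple[str, float, float]]) -> list[str]:
    """Return the names of models not dominated on (size, score)."""
    frontier = []
    for name, size, score in models:
        dominated = any(
            other_size <= size and other_score >= score
            and (other_size < size or other_score > score)
            for other_name, other_size, other_score in models
            if other_name != name
        )
        if not dominated:
            frontier.append(name)
    return frontier


# (name, billions of parameters, AIME-24 score in %) as reported above
aime24 = [
    ("Falcon H1R 7B", 7, 88.1),
    ("ServiceNow Apriel 1.5", 15, 86.2),
    ("Alibaba Qwen3", 32, 63.6),
    ("NVIDIA Nemotron H", 47, 49.7),
]
print(pareto_frontier(aime24))  # ['Falcon H1R 7B']
```

On this single benchmark only Falcon H1R 7B survives: every other model in the list is both larger and less accurate.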

- Coding and Agentic Performance

In the LCB v6 (LiveCodeBench) benchmark, which tests an AI’s ability to write functional code, Falcon H1R 7B scored 68.6%. This is the highest score reported for any model with fewer than 8 billion parameters. It even surpassed the much larger Qwen3-32B (33.4%), making it a strong choice for developers who want a coding assistant running on their own machines.

- Efficiency and Throughput

Thanks to the Mamba integration, the model achieved 1,500 tokens per second on a single high-end GPU. For context, most 7B models struggle to exceed 600–800 tokens per second. That roughly twofold throughput makes the H1R 7B highly scalable for businesses that need to handle millions of customer queries without building new data centers.
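To see what the throughput gap means for a service operator, here is a rough capacity estimate. The workload is hypothetical (one million queries with 500 generated tokens each), 700 tokens per second is taken as the midpoint of the 600 to 800 range quoted above, and real throughput depends heavily on batch size, sequence length, and hardware.

```python
def gpu_hours(queries: int, tokens_per_query: int, tokens_per_second: float) -> float:
    """GPU time (hours) to generate every output token at a fixed aggregate throughput."""
    total_tokens = queries * tokens_per_query
    return total_tokens / tokens_per_second / 3600


QUERIES, TOKENS_PER_QUERY = 1_000_000, 500  # hypothetical workload

print(f"{gpu_hours(QUERIES, TOKENS_PER_QUERY, 1500):.1f}")  # 92.6  (reported H1R 7B rate)
print(f"{gpu_hours(QUERIES, TOKENS_PER_QUERY, 700):.1f}")   # 198.4 (typical 7B, mid-range)
```

Under these assumptions the same workload needs less than half the GPU time, which is where the cost argument comes from.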

Deep Think with Confidence (DeepConf)

One of the most innovative features mentioned in the technical report is DeepConf.

In 2024 and 2025, “reasoning models” (like OpenAI’s o1) became popular for working through a long “chain of thought,” an internal step-by-step dialogue, before answering. However, these models still often hallucinated or got stuck in logical loops.

TII addressed this with DeepConf, a confidence-aware filtering system. During the reasoning phase, the model generates several independent reasoning traces and tracks its own token-level confidence along each one. Traces where that confidence collapses, a common sign of a flawed line of reasoning, are cut short or discarded, and the final answer is chosen by a confidence-weighted vote among the traces that remain. The reported result is fewer errors on science, law, and medical queries.
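The filtering-and-voting idea can be sketched in Python. This is a simplified illustration of the published DeepConf approach, not TII's code: each hypothetical trace is reduced to its final answer plus the log-probabilities of its generated tokens, a trace's confidence is its weakest sliding-window average (one shaky stretch lowers the whole trace), the least confident half is discarded, and the survivors vote with weights derived from their confidence. The window size, keep fraction, and weighting scheme are assumptions chosen for readability; the original method also has an online mode that stops low-confidence traces early, which this sketch omits.

```python
import math
from collections import defaultdict


def trace_confidence(token_logprobs: list[float], window: int = 4) -> float:
    """Lowest sliding-window mean log-probability of a trace (higher = more confident)."""
    if len(token_logprobs) <= window:
        return sum(token_logprobs) / len(token_logprobs)
    window_means = [
        sum(token_logprobs[i:i + window]) / window
        for i in range(len(token_logprobs) - window + 1)
    ]
    return min(window_means)


def confident_vote(traces: list[tuple[str, list[float]]], keep_fraction: float = 0.5) -> str:
    """Keep the most confident traces, then take a confidence-weighted majority vote."""
    scored = sorted(((trace_confidence(lps), answer) for answer, lps in traces), reverse=True)
    kept = scored[:max(1, math.ceil(len(scored) * keep_fraction))]
    votes: defaultdict[str, float] = defaultdict(float)
    for confidence, answer in kept:
        votes[answer] += math.exp(confidence)  # weight in (0, 1]: geometric-mean token probability
    return max(votes, key=votes.get)


# Hypothetical traces: (final answer, per-token log-probabilities)
traces = [
    ("42", [-0.1, -0.2, -0.1, -0.1, -0.2]),
    ("42", [-0.2, -0.1, -0.3, -0.1, -0.1]),
    ("17", [-0.1, -2.5, -3.0, -2.0, -0.1]),  # a shaky stretch in the middle
    ("17", [-1.5, -2.0, -2.2, -1.8, -0.5]),
    ("17", [-0.3, -1.9, -2.4, -2.1, -0.2]),
]
print(confident_vote(traces))  # 42 (a plain majority vote would pick 17)
```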

Sovereign AI and the Multilingual Edge

The Falcon H1R 7B is not just focused on Western users. It was trained in 18 languages, including Arabic, Hindi, Urdu, Russian, and Chinese.

Earlier today, TII also launched Falcon-H1 Arabic, which is now the top-ranked Arabic model on the Open Arabic LLM Leaderboard (OALL). By training on high-quality, culturally aware data from the beginning—rather than just translating English data—the model better understands dialects and local contexts that US-based models often overlook.

The Future of Local AI: Why Size Matters

The most significant impact of the Falcon H1R 7B release is the “democratization of genius.”

Privacy: Because the model has only 7B parameters, a quantized version can run fully offline on a modern laptop with 16GB of RAM. You can discuss sensitive medical, legal, or financial data without it ever leaving your machine.

Energy: Running a 7B model requires much less electricity than a 70B or 400B model. This makes the H1R 7B a “Sustainability Leader” in AI.

Accessibility: Developers in areas with limited high-speed internet can now access “GPT-4 level” reasoning by downloading a single quantized file of about 5GB.
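The laptop and download-size claims follow from simple arithmetic on the parameter count. The sketch below uses the nominal 7 billion parameters and counts weights only; activations, the attention cache, the Mamba state, and the runtime itself need extra memory on top. Real quantized formats also keep some tensors at higher precision, so actual files land somewhat above these idealized figures.

```python
def weight_size_gb(params: float, bits_per_param: float) -> float:
    """Idealized size of the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_param / 8 / 1e9


PARAMS = 7e9  # nominal count implied by the model name

for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label}: {weight_size_gb(PARAMS, bits):.1f} GB")
# 16-bit: 14.0 GB
# 8-bit: 7.0 GB
# 4-bit: 3.5 GB

# Average bits per parameter implied by a file of about 5 GB:
print(f"{5e9 * 8 / PARAMS:.1f} bits")  # 5.7 bits
```

At 16 bits the weights alone would nearly fill a 16GB laptop, so the offline scenario assumes a quantized build, and a file of about 5GB corresponds to an average of under 6 bits per parameter.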

Final Analysis

The Technology Innovation Institute has set a standard in efficiency. By combining the Transformer-Mamba hybrid architecture with a “Curriculum in Reverse” data approach (teaching the hardest logic first), they have developed the world’s most powerful compact AI.

As we progress through 2026, the Falcon H1R 7B will likely become the “Base Engine” for millions of autonomous agents, coding assistants, and educational tools. It shows that in AI, intelligence is not solely about the number of neurons; it’s about how you apply them.